Spending over $45M a year on paid social has taught us one thing: growth comes from structured testing.

We run performance marketing for dozens of brands, and every one of them comes to us with aggressive growth goals. The only reliable way to hit those goals is through rigorously testing your images, videos, copy, and landing pages.

And while many brands obsess over visuals, ad copy is often the highest-leverage variable to test. Testing it can help uncover what actually persuades your audience—insights you can then use to raise your ads’ baseline performance over time.

Why is copy testing so important?

Before we get into the actual testing process, it helps to understand what exactly copy does for an ad campaign. While visuals generally stop the scroll, copy is what wins the click and the conversion. It’s the part of the ad that names the problem, explains your product’s value, and gives someone a reason to act.

Without ongoing copy testing, brands risk creative fatigue, declining performance, and wasted ad spend. The message stops feeling fresh or specific to your audience.

The purpose of testing your ad copy is to create ads that outperform what’s already working. Every campaign has a benchmark, a “control” ad that sets the performance standard. The goal of copy testing is to consistently and systematically beat that control.

In other words, ad copy testing helps turn messaging into a controllable performance lever.

How to run an ad copy test in 6 steps

Effective copy testing involves more than swapping headlines and seeing how performance changes. The best tests run like the scientific method: define what you’re trying to learn, control what changes, and use the results to shape your next hypothesis.

The six steps below show how to run an ad copy test that generates meaningful results.

1. Define the objective and funnel stage

Start with a single objective and primary metric. If you don’t decide in advance what success means, it’s easy to cherry-pick a result after the fact or optimize toward the wrong outcome.

Here are a few examples of objectives and their corresponding metrics:

- Identify the value prop that resonates best by looking at conversion rate

- Test how offer framing (e.g., “Save 50%” vs. “Half Off”) affects cost per acquisition (CPA)

- See whether a shorter headline improves click-through rate (CTR)

Avoid vague objectives like “make the copy better.” Your test should end with a definitive decision: whether to keep, kill, or iterate on a particular ad variation.
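
To make the primary metric concrete, here is a minimal Python sketch of how the three metrics above are commonly calculated. The `AdStats` class, its field names, and the sample numbers are hypothetical and only for illustration. Conversion rate is assumed here to mean conversions per click; some teams define it per impression or per landing-page session instead.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Raw counts for one ad variation (hypothetical example structure)."""
    impressions: int
    clicks: int
    conversions: int
    spend: float  # total spend in your account currency

    @property
    def ctr(self) -> float:
        # Click-through rate: share of impressions that produced a click.
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def conversion_rate(self) -> float:
        # Conversion rate, assumed here to be conversions per click.
        return self.conversions / self.clicks if self.clicks else 0.0

    @property
    def cpa(self) -> float:
        # Cost per acquisition: spend divided by conversions.
        return self.spend / self.conversions if self.conversions else float("inf")


# Made-up numbers, for illustration only.
control = AdStats(impressions=20_000, clicks=400, conversions=20, spend=500.0)
print(f"CTR {control.ctr:.2%}, CVR {control.conversion_rate:.2%}, CPA {control.cpa:.2f}")
```

Whichever definitions you choose, write them down before launch so every variation is judged by the same formula.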

Defining the objective of your ad copy test forces you to think about where in the funnel the message operates. Are you speaking to cold prospects who need education? Or warm audiences who need reassurance and urgency? The goal of the copy should match the stage of awareness.

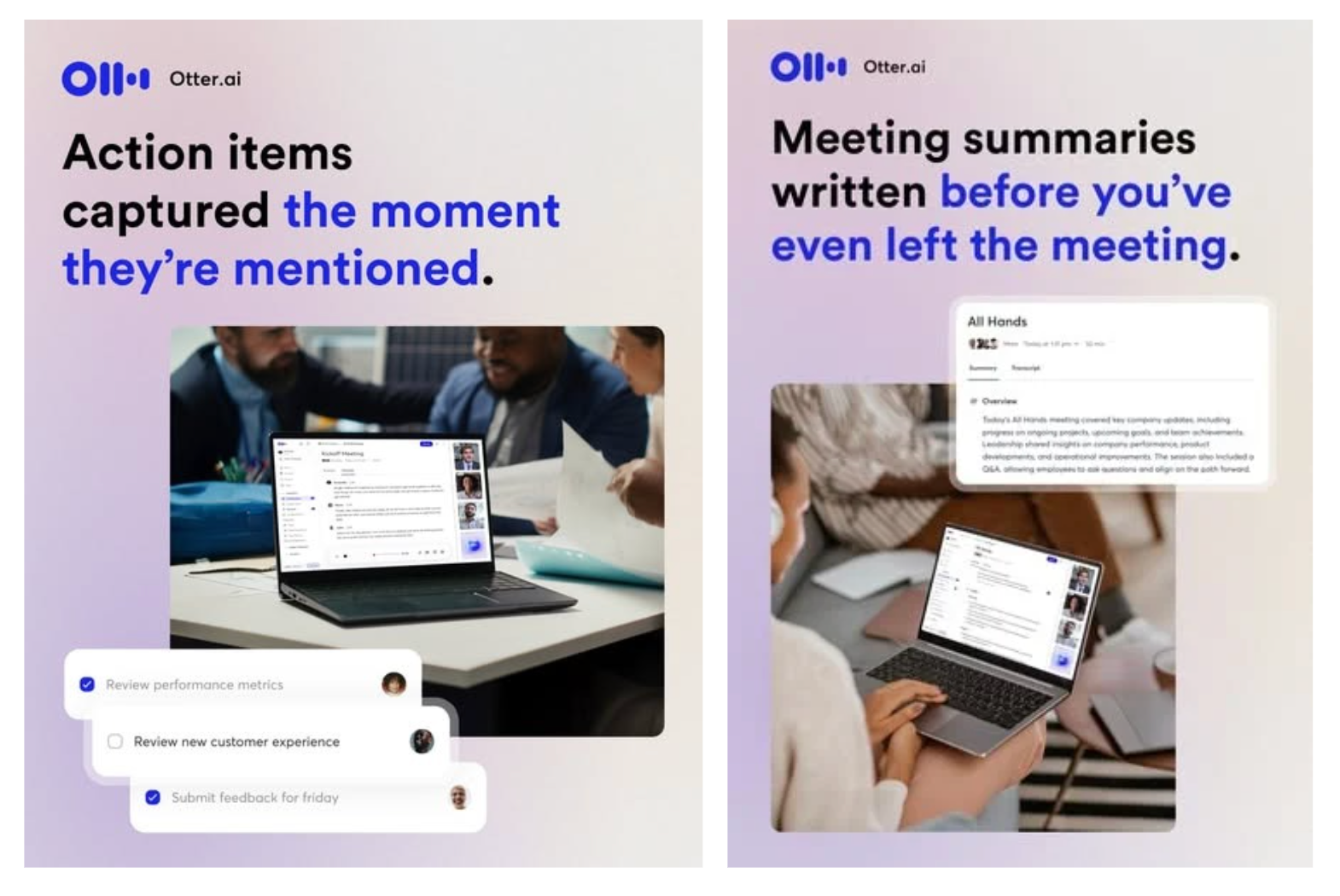

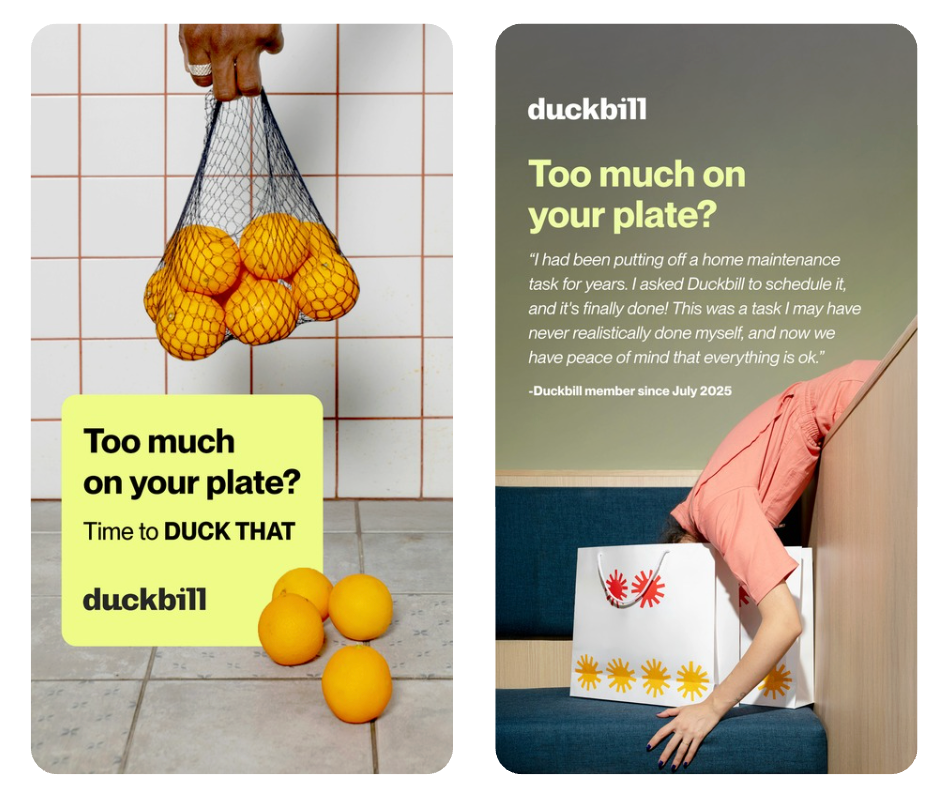

The same headline can serve different psychological purposes depending on the funnel stage. For example, take a look at these two ads from the AI executive assistant company Duckbill.

At the top of the funnel, “Too much on your plate?” functions as a pattern interrupt, as in the ad on the left. It creates instant relatability, and the job of the copy is awareness.

But for the bottom of the funnel, the audience likely already recognizes the headline and understands the problem. They need reassurance. In the ad on the right, Duckbill includes a testimonial to support the same “Too much on your plate?” headline. This shifts the copy from introducing the idea to validating it, saying “Here’s proof that this works” instead of “Here’s the problem.”

Once you know what you’re trying to learn, the next step is designing a test around your hypothesis.

2. Define your testing methodology

Test results don’t translate perfectly across platforms. A message that wins on Meta won’t necessarily win on Google or LinkedIn because user intent and creative norms are different. You need to design your test for the platform you’re running it on.

You’ll typically choose between two testing approaches:

- A/B testing: Also known as “split testing,” this method compares two versions of a single variable (e.g., two different headlines) while everything else remains the same.

- Multivariate testing: This method compares multiple variables simultaneously (e.g., a change in headline and CTA) so that you’re effectively comparing combinations.

A/B testing is the cleanest and most precise way to learn because you’re focusing on one variable. However, because multivariate tests change several elements at once, they can be more efficient than running multiple sequential A/B tests.

Different circumstances call for different tests. A quick rule of thumb:

- Use A/B testing when you have limited traffic, need rapid results, or are making big changes to your ad text.

- Use multivariate testing when you have significant traffic and want to optimize top-performing ads by testing different combinations of text.
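
The traffic rule of thumb is easier to see with numbers. The sketch below is a rough illustration in Python: it enumerates every headline and CTA combination in a multivariate test and shows how the available impressions get divided. The second headline, the CTAs, and the weekly impression figure are all hypothetical placeholders.

```python
from itertools import product

# Hypothetical copy elements to test; replace with your own.
headlines = ["Too much on your plate?", "Get your evenings back"]
ctas = ["Book today", "Start free trial", "Learn more"]

# A full multivariate test compares every headline/CTA combination.
combinations = list(product(headlines, ctas))

weekly_impressions = 60_000  # assumed total traffic available to the test
per_cell = weekly_impressions // len(combinations)

print(f"{len(combinations)} combinations, about {per_cell} impressions each per week")
for headline, cta in combinations:
    print(f"- {headline} | {cta}")
```

An A/B test of the two headlines alone would split the same traffic across two variations instead of six, which is why limited traffic tends to favor A/B testing.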

3. Create different variations of copy

With your objective clearly defined, it’s time to get creative (our favorite part).

Instead of rewriting an entire ad five times, isolate the variable that matters. When testing copy, your variations might focus on changes in:

- Headlines

- Angles (problem-focused vs. benefit-driven vs. social proof)

- Value propositions

- Objection-handling statements

- Calls to action

For example, if your hypothesis is that urgency increases conversions, you might test:

- “Book today—limited availability”

- “Don’t put it off another month.”

- “Spots are filling fast.”

It’s the same offer for the same audience, but a different psychological trigger and framing.

A good copy variation should be meaningfully different, not just a synonym swap. If you can’t explain what psychological lever you’re pulling (like clarity, urgency, proof, or objection-handling), it’s probably too minor to teach you anything.

For more inspiration on copy variations to test, check out our blog post about ad copy templates.

4. Create a creative testing survey

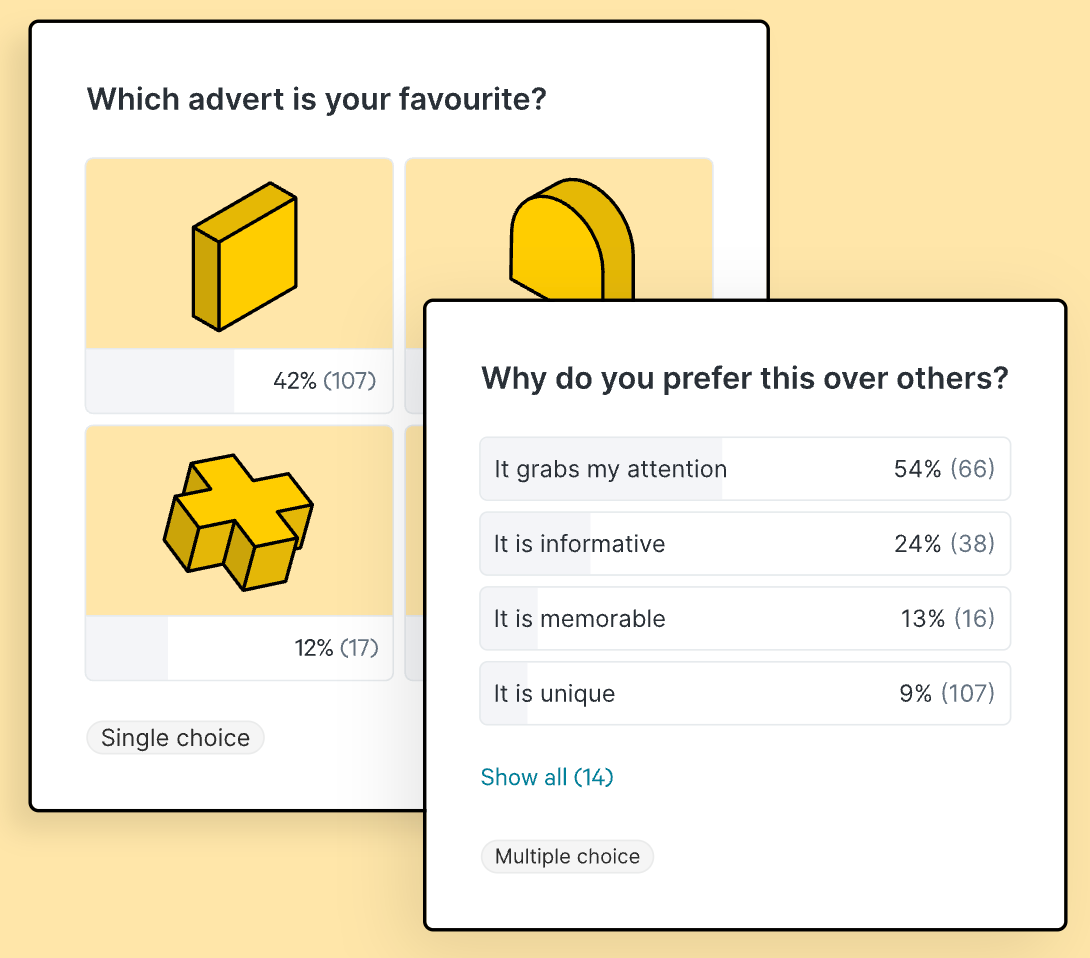

Many performance marketers skip this step: surveying your existing audience for feedback on your ad creatives before putting any money into an ad platform.

While this step isn’t mandatory, it gives you insights you might not uncover through in-platform testing alone. Instead of spending budget to discover that your messaging flops, you can identify weaknesses early, before the ad ever enters the auction.

Think of a creative testing survey as a pre-launch filter. Live performance data tells you what works, but a survey provides a bigger picture of why something might or might not work. It offers more granularity and qualitative insights than an A/B or multivariate test can provide on its own.

You can structure your survey using the following methods:

- Multiple choice questions: Present two or more copy variations and ask respondents which one they prefer. This works well for testing hooks, headlines, or CTAs.

- Open-ended questions: Encourage respondents to explain what they liked or disliked about the message. Qualitative insights often reveal objections or confusion you wouldn’t have predicted.

- Rating scales: Ask participants to rate clarity, credibility, or appeal on a scale. This makes it easier to compare variations quantitatively.
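
For rating-scale questions, comparing variations can be as simple as averaging each dimension. Here is a minimal Python sketch, assuming a 1 to 5 scale; the variation names, dimensions, and scores are invented for illustration.

```python
from statistics import mean

# Hypothetical 1-5 ratings from survey respondents, keyed by copy variation.
ratings = {
    "Variation A": {"clarity": [4, 5, 3, 4], "credibility": [3, 4, 4, 3]},
    "Variation B": {"clarity": [5, 4, 5, 4], "credibility": [4, 4, 5, 5]},
}

# Average each dimension per variation so the options can be compared side by side.
summary = {
    name: {dimension: mean(scores) for dimension, scores in dims.items()}
    for name, dims in ratings.items()
}

for name, dims in summary.items():
    print(name, {dimension: round(score, 2) for dimension, score in dims.items()})
```

With only a handful of respondents, treat small differences in averages as directional rather than conclusive.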

When your survey is ready to go, choose a channel that reaches respondents who are representative of your target consumers. For instance, send it to your email list, run a poll on X, or use professional research software like Zappi or Quantilope to get more accurate results.

Not sure when it’s worth using this method? Survey-based copy testing is especially valuable when:

- You’re launching a new product

- You’re repositioning your brand

- You’re entering a new market

5. Launch your test

When your variations are ready, it’s time to launch your test.

How long should your test run? We recommend:

- Give your tests a minimum of 3 to 7 days. New ads often perform differently in the first 48 hours, before the ad platform’s algorithms stabilize.

- For more reliable results, 7 to 14 days is ideal. This gives the ad platform time to gather more data.

- Smaller accounts may have to run their tests for longer periods to see meaningful results.

It’s also worth considering the specific ad platform you’re running your test on. For instance, Meta recommends running tests for a minimum of 7 days.
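
If you want a rough sense of whether those time frames are enough for your account, you can estimate how much traffic each variation needs. The Python sketch below uses a standard statistical approximation for comparing two rates; it is not a formula from any ad platform. The baseline rate, the lift you hope to detect, and the daily impression figure are assumptions to replace with your own numbers.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate sample needed per variation for a two-sided two-proportion test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Assumed inputs: 2% baseline CTR, hoping to detect a 20% relative lift.
n = sample_size_per_variation(baseline_rate=0.02, relative_lift=0.20)
daily_impressions_per_variation = 5_000  # hypothetical delivery
days_needed = ceil(n / daily_impressions_per_variation)
print(f"{n} impressions per variation, roughly {days_needed} days")
```

Treat the output as a floor on data volume, not a replacement for the minimum durations above: even a high-traffic account still needs time for delivery to stabilize.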

Once your test is live, monitor delivery to ensure each variation is receiving fair spend, and resist the urge to make changes too early.
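
One simple way to check for fair spend is to compare each variation's share of total spend against an even split. This is a minimal Python sketch; the variation names, spend figures, and the 50% tolerance are all hypothetical, so pick a threshold that suits your account.

```python
# Hypothetical spend per variation so far, e.g. exported from your ad platform.
spend = {"control": 410.0, "urgency": 395.0, "social_proof": 95.0}

total = sum(spend.values())
fair_share = 1 / len(spend)
tolerance = 0.5  # assumed threshold: flag anything below half of an even split

underdelivered = [
    name for name, amount in spend.items()
    if amount / total < fair_share * tolerance
]
print("Under-delivering variations:", underdelivered)
```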

6. Analyze the results and adjust your campaigns accordingly

After a sufficient amount of time has passed, your role shifts from creator to analyst. Remember that your job isn’t just to pick a winner: it’s to extract an insight you can build on.

Analyze the test results according to the metric(s) you defined alongside your objective. Did the test confirm or disprove your hypothesis?
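
To judge whether a difference is more than noise, one common option is a two-proportion z-test. The sketch below is a standard-library Python illustration under the assumption that your primary metric is a rate (such as conversions per click); the function name and the result numbers are made up.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Made-up results: control (a) vs. challenger (b), conversions out of clicks.
z, p_value = two_proportion_z_test(conv_a=120, n_a=4_000, conv_b=156, n_b=4_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A p-value below 0.05 is a widely used convention for calling a result significant, but it is a convention, not a guarantee. Pair it with the size of the lift and the cost of being wrong before you keep, kill, or iterate.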

Every test reveals something about your audience:

- What they care about

- What they ignore

- What they trust

- What makes them act

If you ran a survey beforehand, this is where you can connect the dots. Compare perceived performance (what respondents said would resonate) with actual performance (what drove clicks and conversions). For example:

- Did the variation people said they preferred also perform best live?

- Did qualitative feedback highlight objections that later showed up in your conversion rate?

Survey insights provide directional understanding while live ad data provides behavioral proof. Together, they give you a clearer picture of what’s working and why.
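
A quick way to connect the two data sources is to rank variations by each and see where they disagree. Here is a minimal Python sketch with invented numbers; the variation labels and both sets of scores are hypothetical.

```python
# Hypothetical results: share of survey respondents preferring each variation,
# and the live conversion rate each one achieved.
survey_preference = {"A": 0.50, "B": 0.30, "C": 0.20}
live_conversion_rate = {"A": 0.031, "B": 0.042, "C": 0.018}


def ranked(scores):
    """Return variation names ordered from best to worst score."""
    return sorted(scores, key=scores.get, reverse=True)


survey_rank = ranked(survey_preference)
live_rank = ranked(live_conversion_rate)

print("Survey favorite:", survey_rank[0])
print("Live winner:", live_rank[0])
if survey_rank[0] != live_rank[0]:
    print("Stated preference and behavior disagree; check the open-ended answers for why.")
```

Disagreements like this are often the most useful findings, because they point to a gap between what people say they want and what actually moves them to act.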

Wrapping up

Effective ad copy testing is a structured and iterative process, not a one-time tactic. As consumer preferences evolve, keep testing your ad copy to identify what actually resonates. Otherwise, your messaging will lose relevance and fail to win over customers.

At Primer, testing ad copy is a central part of our performance growth system. If you want help running copy tests that produce clear learnings, reach out. We can audit your current messaging, design the next round of tests, and help you turn results into a repeatable testing system.
